# Infrastructure for AI: Vector Database Deep Dive

The backbone of modern AI isn't just the model; it's the infrastructure that stores and retrieves the knowledge the model draws on.

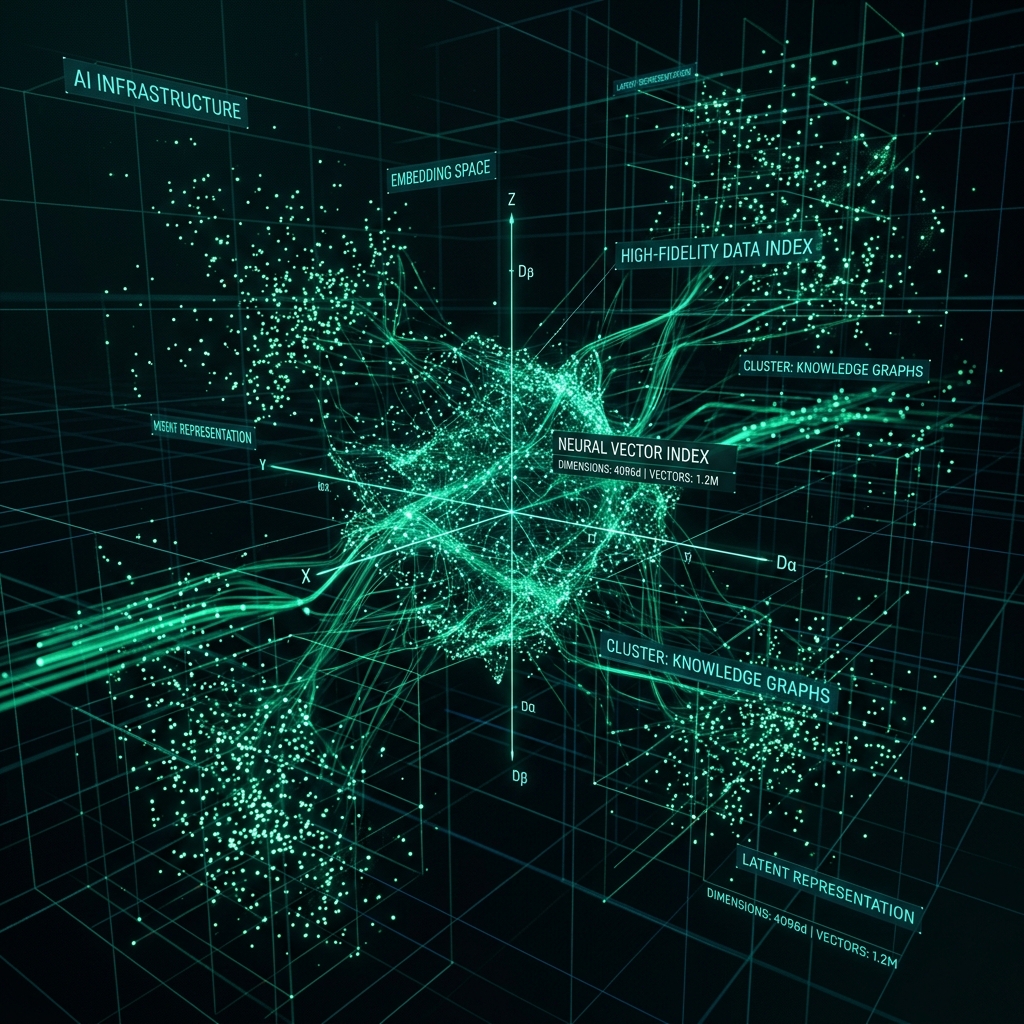

## 1. The Physics of Vector Space

A vector database doesn't store words; it stores coordinates in a high-dimensional space (often 768 to 3072 dimensions).

### Dimensionality and Fidelity

* **768 Dimensions**: Efficient, good for general search.

* **3072 Dimensions**: Higher fidelity, at four times the storage and compute cost per vector. Worth considering for complex architectural schemas where subtle differences in terminology change the entire meaning.
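To make the efficiency side of this trade-off concrete, here is a rough back-of-the-envelope sketch. It assumes each dimension is stored as a 4-byte float32 and ignores index overhead; the function name `raw_vector_bytes` and the one-million-vector corpus are illustrative choices, not figures from any particular database.

```python
def raw_vector_bytes(num_vectors: int, dims: int, bytes_per_value: int = 4) -> int:
    """Raw storage for the vectors alone, assuming float32 values (4 bytes each)."""
    return num_vectors * dims * bytes_per_value


# Compare the two dimensionalities for a hypothetical corpus of one million vectors.
for dims in (768, 3072):
    gib = raw_vector_bytes(1_000_000, dims) / 2**30
    print(f"{dims} dims: {gib:.2f} GiB per million vectors")
```

Under these assumptions the raw vectors come to about 2.86 GiB at 768 dimensions and 11.44 GiB at 3072, before any index is built on top.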

## 2. Indexing: The HNSW Algorithm

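Comparing a query against millions of vectors one by one is possible, but too slow for interactive use. **HNSW (Hierarchical Navigable Small World)** is a widely used approximate nearest-neighbor index that trades a small amount of recall for much faster retrieval.

It builds a layered graph in which the search hops from node to node, moving closer to the query at each step, until it reaches the neighborhood of the nearest vectors, typically in milliseconds.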
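The hopping idea can be sketched in a few lines. This is a deliberately simplified, single-layer greedy search over a brute-force k-nearest-neighbor graph, not the full HNSW algorithm (which adds multiple layers and a candidate list). The helper names, the 2-D points, and `k=8` are all assumptions made for illustration.

```python
import math
import random


def distance(a, b):
    """Euclidean distance between two points."""
    return math.dist(a, b)


def build_knn_graph(points, k):
    """Link every point to its k nearest neighbours (brute force, illustration only)."""
    graph = {}
    for i, p in enumerate(points):
        others = sorted(
            (j for j in range(len(points)) if j != i),
            key=lambda j: distance(p, points[j]),
        )
        graph[i] = others[:k]
    return graph


def greedy_search(points, graph, query, entry):
    """Hop to whichever neighbour is closest to the query until no neighbour improves."""
    current = entry
    current_dist = distance(points[current], query)
    while True:
        best, best_dist = current, current_dist
        for nb in graph[current]:
            d = distance(points[nb], query)
            if d < best_dist:
                best, best_dist = nb, d
        if best == current:  # local minimum: no neighbour is closer
            return current, current_dist
        current, current_dist = best, best_dist


random.seed(0)
points = [(random.random(), random.random()) for _ in range(200)]
graph = build_knn_graph(points, k=8)
query = (0.5, 0.5)
found, dist = greedy_search(points, graph, query, entry=0)
print(f"stopped at node {found}, distance {dist:.3f}")
```

The search only inspects the nodes along its path rather than all 200 points, which is where the speed-up comes from. It can stop at a local minimum instead of the true nearest neighbor, which is why results from graph indexes are approximate.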

## 3. Metadata Filtering & Security

In a multi-tenant SaaS environment, data from User A must never surface in User B's results. Vector databases commonly enforce this with **Metadata Filtering**.

We attach IDs and tags to every vector, ensuring that the database-level query only "sees" the data the current user is authorized to access.
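A minimal in-memory sketch of this pattern is shown below. The `Record` type, the `tenant_id` field, and the `search` function are hypothetical names, and scoring uses a plain dot product for brevity; a real database would apply the filter inside its own query engine.

```python
from dataclasses import dataclass


@dataclass
class Record:
    tenant_id: str      # owner tag attached to every vector
    text: str
    embedding: tuple


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def search(records, query, tenant_id, top_k=3):
    """Filter by tenant first, then rank only the records that tenant may see."""
    allowed = [r for r in records if r.tenant_id == tenant_id]
    ranked = sorted(allowed, key=lambda r: dot(r.embedding, query), reverse=True)
    return ranked[:top_k]


records = [
    Record("user_a", "A's invoice policy", (0.9, 0.1)),
    Record("user_b", "B's invoice policy", (0.9, 0.1)),
    Record("user_a", "A's holiday rota", (0.1, 0.9)),
]

results = search(records, query=(1.0, 0.0), tenant_id="user_a")
print([r.text for r in results])  # ["A's invoice policy", "A's holiday rota"]
```

Note that User B's record has an identical embedding to User A's top hit, yet it never appears in the results. In practice the `tenant_id` passed to the query should come from the authenticated session on the server, not from a value the client supplies.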

## 4. Hardware Acceleration

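Vector math (dot products and cosine similarity) is highly parallel, which makes it a good fit for GPUs and for SIMD instructions on CPUs. Modern infrastructure uses either specialized hardware or optimized software (such as the pgvector extension for Postgres, which runs on the CPU) to perform these comparisons at very high throughput.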
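For reference, the two operations named above are small enough to write out in plain Python. This is an unoptimized sketch for clarity; production systems run the same arithmetic in vectorized or GPU kernels.

```python
import math


def dot(a, b):
    """Sum of element-wise products."""
    return sum(x * y for x, y in zip(a, b))


def cosine_similarity(a, b):
    """Dot product divided by the product of the two vector lengths."""
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))


a = (1.0, 0.0)
b = (0.0, 1.0)
c = (2.0, 0.0)

print(cosine_similarity(a, c))  # 1.0: same direction, different length
print(cosine_similarity(a, b))  # 0.0: perpendicular vectors
```

Because every multiply-and-add in `dot` is independent of the others, the work splits cleanly across many parallel lanes, which is the property hardware acceleration exploits.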

## 5. Specialist's Insight: Selecting your Stack

Don't over-engineer. While specialized vector DBs (like Pinecone or Weaviate) are powerful, a well-tuned **Postgres + pgvector** setup (like the one powering Theorycraft) offers a strong balance of relational data integrity and high-performance vector search for the large majority of enterprise use cases.
